Have you ever wondered how medical practices in 19th century America shaped modern healthcare? This era was marked by significant advancements and profound challenges that influenced public health and treatment methodologies.

This article covers the state of medical knowledge during the 1800s, the treatments physicians commonly used, and the changing role of hospitals and medical institutions. Together, these show how medical understanding evolved over the century and how far medicine has come since.

The state of medical knowledge in the 19th century

The 19th century marked a pivotal era in the evolution of medical knowledge in America. During this time, medicine transitioned from traditional practices to more scientific approaches. The prevailing medical theories included humoral theory, which posited that health was maintained by a balance of bodily fluids, and miasma theory, which suggested that diseases were caused by “bad air” or vapors.

One significant advancement in the medical field was the reform of medical schools. In the later decades of the century, institutions such as Harvard Medical School, which overhauled its curriculum in the 1870s, and the Johns Hopkins School of Medicine, which opened in 1893, emphasized rigorous scientific training in anatomy, physiology, and pathology. Formal medical education produced a more knowledgeable and skilled medical workforce.

- Humoral Theory: Influential well into the 19th century, based on the balance of four bodily fluids: blood, phlegm, black bile, and yellow bile.

- Miasma Theory: Explained disease spread through polluted air; led to public health reforms in urban areas.

- Germ Theory: Developed by Louis Pasteur, Robert Koch, and others from the 1860s through the 1880s, revolutionizing the understanding of infectious diseases.

Despite these advancements, many medical practices remained rudimentary. Until the 1840s, surgery was performed without effective anesthesia. The introduction of ether in 1846 and chloroform in 1847 changed surgery drastically, allowing for longer and more complex operations.

One notable case from abroad involved the English physician John Snow, who is considered a pioneer in epidemiology. In 1854, he investigated a cholera outbreak in London, mapping cases and identifying a contaminated water pump on Broad Street as the source. His work laid the foundation for modern public health practices and changed the understanding of disease transmission on both sides of the Atlantic.

Overall, the state of medical knowledge in the 19th century was characterized by a struggle between traditional beliefs and emerging scientific evidence. While significant progress was made, many misconceptions persisted, paving the way for further advancements in the 20th century.

Common medical practices and treatments of the era

The 19th century showcased a diverse range of medical practices and treatments that reflected the evolving understanding of health and disease. While some methods were effective, others were steeped in superstition. Here are some common practices of the time:

- Bloodletting: This was a prevalent practice believed to balance bodily humors. Physicians often used leeches or made incisions to withdraw blood, sometimes removing several pints in a single session. This method was thought to treat various ailments, from fevers to headaches.

- Herbal remedies: Herbal medicine was widely used, drawing on indigenous practices and European traditions. Common herbs included willow bark for pain relief and echinacea for infections. Many families maintained their own herbal gardens to ensure access to these remedies.

- Homeopathy: Founded by Samuel Hahnemann in the late 18th century, homeopathy gained popularity in the 19th century. It operated on the principle of “like cures like,” using highly diluted substances to treat ailments. The first homeopathic medical college in the United States opened in 1848, and by the end of the century the country had roughly twenty.

- Surgery: Surgical practices advanced significantly during this period, particularly with the introduction of anesthesia in the 1840s. Ether and chloroform allowed for more complex procedures. For instance, in October 1846, dentist William Morton administered ether at Massachusetts General Hospital while surgeon John Collins Warren removed a tumor from a patient’s neck, a public demonstration that transformed surgical practice.

Despite these advancements, many treatments were rudimentary. For example, mercury was commonly used to treat syphilis despite its toxic side effects. In addition, before germ theory was accepted, hospitals paid little attention to cleanliness in wards and operating rooms, which resulted in high infection rates.

Overall, the 19th century represented a time of experimentation and change. Many practices laid the groundwork for modern medicine, while others served as cautionary tales of the dangers of inadequate medical knowledge. As the century progressed, the need for more scientific approaches became increasingly evident.

The role of hospitals and medical institutions

The 19th century witnessed a significant transformation in the role of hospitals and medical institutions in America. Initially, hospitals were often viewed as places for the poor and destitute, where patients received minimal care. However, as medical knowledge advanced, hospitals began to evolve into centers for scientific treatment and education.

One of the most notable developments was the establishment of teaching hospitals. These institutions were integral to the training of medical professionals, combining clinical practice with academic study. Massachusetts General Hospital, chartered in 1811 and opened to patients in 1821, served as a teaching hospital for Harvard Medical School and became a model for future institutions.

- Increase in hospital numbers: Hospitals were rare at the start of the century. A national survey in 1873 counted fewer than 200, while by the first decade of the 20th century there were more than 4,000.

- Professionalization of medicine: Alongside the growth of hospitals, organized medical societies formed and set standards of practice and education.

- Specialization: As hospitals expanded, they began to focus on specialized care, such as maternity wards and surgical units, further enhancing the quality of medical treatment.

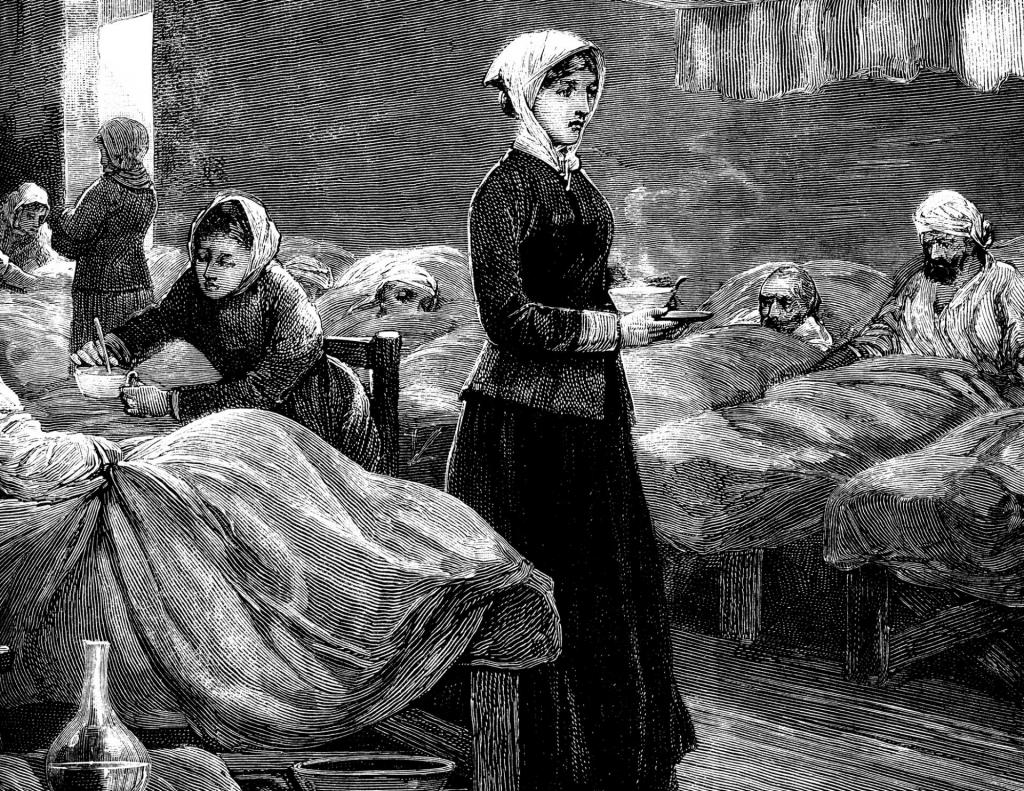

The role of hospitals was further solidified by the contributions of notable figures. Florence Nightingale, during the Crimean War, emphasized the importance of sanitary conditions, which influenced hospital design and patient care in America. Her work highlighted how proper hygiene could drastically reduce infection rates, leading to reforms in hospital practices.

Moreover, hospitals served as a focal point for public health initiatives. They became involved in addressing epidemics such as cholera and smallpox, providing not only treatment but also education on prevention. For instance, New York City’s Metropolitan Board of Health, created in 1866, coordinated the city’s response to epidemic disease, and municipal vaccination efforts helped reduce smallpox incidence.

The 19th century marked a shift in the perception and function of hospitals in America. From mere shelters for the impoverished, they transformed into vital institutions that played a crucial role in advancing medical practices, education, and public health initiatives.

Influential medical figures and pioneers

The 19th century was home to numerous influential medical figures, in the United States and abroad, whose work shaped American medicine. Their contributions not only advanced medical knowledge but also transformed practices in hospitals and clinics across the nation.

One of the most significant figures was Ignaz Semmelweis, a Hungarian physician who is often referred to as the “father of infection control.” In 1847, while working in a Vienna maternity clinic, he found that handwashing with a chlorinated lime solution drastically reduced the incidence of puerperal fever among women giving birth. His findings met resistance, and his main account did not appear until 1861, but they anticipated later antiseptic procedures.

- Oliver Wendell Holmes Sr.: Argued in his 1843 essay “The Contagiousness of Puerperal Fever” that physicians carried the disease from patient to patient, and emphasized the importance of cleanliness in medical practice.

- Joseph Lister: Although British, his work on antiseptic surgery influenced American practices in the late 19th century, promoting the use of carbolic acid to disinfect wounds, dressings, and surgical instruments.

- John Snow: Known for his pioneering work in epidemiology, Snow’s investigation of a cholera outbreak in London in 1854 helped establish the importance of clean water supply, influencing public health policies.

Another prominent figure was William Osler, often considered the father of modern medicine. His book, “The Principles and Practice of Medicine,” published in 1892, became a standard reference for medical students and practitioners alike, emphasizing the importance of clinical experience and bedside teaching.

In addition to these individuals, Elizabeth Blackwell deserves mention as the first woman to receive a medical degree in the United States in 1849. Her achievements paved the way for future generations of female physicians, expanding the medical profession’s diversity.

Lastly, the work of Samuel Hahnemann, the German founder of homeopathy, spread widely during this century. His principles, first proposed in 1796 and set out in his “Organon” of 1810, introduced alternative treatment methods that challenged conventional medicine and gained popularity among certain segments of the population.

These figures collectively contributed to a significant evolution in medical practices and education in 19th-century America, paving the way for modern medical advancements.

The impact of epidemics and public health challenges

The 19th century was marked by several devastating epidemics that profoundly influenced public health policies and practices in America. Diseases such as cholera, smallpox, and yellow fever emerged as significant threats, prompting a reevaluation of medical responses and community health initiatives.

Cholera outbreaks, particularly those of 1832, 1849, and 1866, resulted in thousands of deaths. For instance, the 1849 epidemic alone killed about 5,000 people in New York City. The disease’s rapid transmission and high mortality rate highlighted the urgent need for improved sanitation and hygiene practices.

- Smallpox: Smallpox vaccination, introduced to the United States around 1800, became a crucial public health measure. By the 1850s, states had begun to adopt vaccination requirements, which reduced smallpox cases.

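- Yellow Fever: The 1878 yellow fever epidemic, which devastated Memphis, Tennessee, prompted Congress to pass the National Quarantine Act of 1878 and to create the National Board of Health in 1879. The U.S. Marine Hospital Service, which dated back to 1798, took on a growing role in quarantine and epidemic control.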

- Typhoid Fever: Typhoid fever outbreaks also led to increased awareness of water quality and sanitation, influencing the development of municipal water systems.

In response to these public health challenges, various organizations emerged, including the American Public Health Association (APHA), founded in 1872. The APHA aimed to address health issues through education and advocacy, recognizing that community involvement was essential for effective public health strategies.

Moreover, the impact of these epidemics extended beyond immediate health concerns. They fostered a greater understanding of disease transmission and the importance of preventive measures. For example, the work of John Snow during the 1854 cholera outbreak in London, which involved mapping cases to identify contaminated water sources, laid the groundwork for modern epidemiology.

These public health challenges ultimately led to the establishment of more structured health systems, emphasizing disease prevention and health education. By the end of the 19th century, communities began to recognize the critical role of public health in enhancing overall societal well-being.

Advancements in surgery and anesthesia

The 19th century marked a revolutionary period for surgery and anesthesia, transforming the field of medicine in America. Prior to this era, surgical procedures were often brutal and performed without effective pain management, leading to high mortality rates. However, advancements in techniques and the introduction of anesthesia significantly improved patient outcomes.

One of the most notable milestones was the introduction of ether anesthesia in the 1840s. In October 1846, dentist William Morton administered ether to a patient undergoing surgery at Massachusetts General Hospital. This was the first successful public demonstration of surgical anesthesia, although Georgia physician Crawford Long had used ether in an operation as early as 1842, and it led to wide acceptance of anesthesia in surgical practice.

- Ether: Widely used after its introduction, allowing for longer and more complex surgeries.

- Chloroform: Introduced in 1847, shortly after ether, it provided a more potent alternative but with increased risks.

- Local anesthetics: Local anesthesia emerged later, beginning with the use of cocaine in 1884, paving the way for less invasive procedures.

In addition to advancements in anesthesia, surgical techniques themselves evolved significantly. Joseph Lister introduced antisepsis in the 1860s, using carbolic acid to disinfect wounds, dressings, and instruments in order to prevent infection. His methods drastically reduced the incidence of post-surgical infections, transforming surgical safety.

Another key development came from the bequest of Johns Hopkins, a Baltimore merchant and philanthropist who died in 1873. His estate funded the Johns Hopkins Hospital, which opened in 1889 and emphasized research and advanced surgical training. The hospital became a model for medical education by integrating surgical practice with scientific research.

Reliable figures for the period are scarce and vary by procedure and hospital, but the trend is clear: deaths after major operations such as amputations, which could approach 50% in some hospitals before antisepsis, fell sharply by the end of the century. Anesthesia made longer operations possible, and antiseptic techniques made them far more survivable.

Overall, the 19th century was a pivotal time for surgery and anesthesia, laying the groundwork for modern surgical practices. The innovations and pioneers of this era not only changed the way surgery was performed but also significantly improved patient care and safety in medical procedures.

The development of medical education and licensing

The evolution of medical education in 19th century America was characterized by significant changes aimed at improving the quality of healthcare. Initially, medical training was largely informal, with apprenticeships being the primary method for acquiring medical knowledge. However, as the century progressed, formal medical schools began to emerge.

Several institutions shaped this shift. Harvard Medical School, founded in 1782, raised its academic standards in the 1870s and became a model for others. Johns Hopkins University, established in 1876, opened its medical school in 1893 with a comprehensive curriculum that combined theoretical and practical training.

- Growth of Medical Schools: In 1800, there were only a handful of medical schools in the United States. By 1900, that number had increased to about 160.

- Standardized Curriculum: The introduction of standardized curricula helped ensure that all medical students received a consistent education, covering essential subjects such as anatomy, physiology, and pathology.

- Licensing Requirements: As the profession evolved, states began to implement licensing examinations to regulate who could practice medicine. This was crucial in establishing professional standards.

In 1847, the American Medical Association (AMA) was formed, advocating for higher standards in medical education and practice. The AMA played a critical role in pushing for the establishment of licensing laws across various states, which helped to eliminate unqualified practitioners from the field.

Earlier licensing laws had largely been repealed by the middle of the century, but from the 1870s onward states began enacting new ones, and in 1889 the U.S. Supreme Court upheld state medical licensing in Dent v. West Virginia. As a result, by the end of the century, most states had some form of licensing law, although requirements varied widely, and a growing number required practitioners to pass examinations.

Overall, the development of medical education and licensing during the 19th century not only enhanced the training of physicians but also established a foundation for modern medical practice in America. This transformation was vital for ensuring public trust and safety in healthcare.

Traditional remedies versus emerging scientific approaches

In the 19th century, the medical landscape in America was characterized by a significant tension between traditional remedies and emerging scientific approaches. Many practitioners relied on herbal medicine, homeopathy, and other folk remedies, while a growing number of physicians began to embrace the principles of scientific inquiry.

Traditional medicine often included treatments based on long-standing cultural practices. For example, bloodletting was a common method used to treat various ailments, based on the belief that imbalances in bodily humors caused disease. The practice declined after mid-century but persisted in some quarters into the late 1800s, despite its questionable efficacy.

- Herbal Remedies: These involved using plants and natural substances for healing, such as echinacea for immune support and willow bark for pain relief.

- Homeopathy: Founded by Samuel Hahnemann in the late 18th century, homeopathy gained traction in the 19th century, emphasizing “like cures like” with highly diluted substances.

- Bloodletting: Although declining, this practice remained popular among many physicians until scientific evidence began to refute its benefits.

As the century progressed, advancements in scientific understanding began to challenge these traditional beliefs. The introduction of germ theory by Louis Pasteur and Robert Koch fundamentally changed the perception of disease causation. By the 1880s, this new knowledge prompted a shift towards more rigorous scientific methods in medicine.

For instance, antiseptic techniques introduced by Joseph Lister in the 1860s significantly reduced infection rates in surgical procedures. The adoption of these methods demonstrated the importance of cleanliness and sterilization, moving away from outdated practices.

Moreover, the establishment of medical schools and the implementation of standardized training programs in the late 19th century helped to elevate the status of medicine. Institutions such as Johns Hopkins University, founded in 1876, emphasized scientific research and clinical practice, creating a new generation of physicians who prioritized evidence-based approaches.

| Aspect | Traditional Remedies | Scientific Approaches |
|---|---|---|
| Basis | Folk knowledge and cultural practices | Scientific research and evidence |
| Common Practices | Herbal medicine, bloodletting | Antiseptics, vaccinations |
| Outcome | Variable effectiveness | Improved patient outcomes |

The legacy of 19th century medicine in modern healthcare

The 19th century laid the groundwork for many practices and principles that continue to influence modern healthcare. One of the most significant legacies is the establishment of rigorous medical education and training. Building on earlier foundations such as the medical school of the College of Philadelphia (now the University of Pennsylvania), founded in 1765, the century saw medical schools multiply and, in its final decades, reform their curricula. The focus on scientific methods and clinical practice in these schools helped create a new generation of well-trained physicians.

Another critical advancement was the introduction of anesthesia, which revolutionized surgical procedures. The first successful public demonstration of ether anesthesia occurred in 1846 at Massachusetts General Hospital, where William Morton administered the ether and surgeon John Collins Warren operated. This innovation not only alleviated patient suffering but also paved the way for more complex and invasive surgeries, which became much safer once antiseptic techniques were adopted.

- Standardization of medical practices: The 19th century emphasized the need for standardized procedures and protocols, leading to improved patient outcomes.

- Licensing and regulation: The introduction of licensing for practitioners ensured that only qualified individuals could practice medicine, enhancing public trust in healthcare.

- Emergence of specialties: As medicine progressed, various specialties began to form, such as surgery, obstetrics, and psychiatry, which are foundational to modern healthcare systems.

Moreover, the era witnessed the rise of public health initiatives, driven by a growing awareness of sanitation and disease prevention. The United States Sanitary Commission, established in 1861 during the Civil War, highlighted the importance of hygiene and led to improvements in hospital care and community health standards. These principles remain vital in contemporary public health strategies.

For instance, the establishment of the Centers for Disease Control and Prevention (CDC) in 1946 can be traced back to these early public health movements. The CDC continues to play a crucial role in monitoring disease outbreaks and promoting health education, reflecting the enduring impact of 19th-century medical advancements.

The legacy of 19th-century medicine is evident in the frameworks of modern healthcare, from education and regulation to public health initiatives. The innovations of this period not only improved medical practices but also shaped the evolution of patient care standards we rely on today.

Frequently Asked Questions

What were the main causes of medical advancements in 19th century America?

The main causes of medical advancements included scientific discoveries, improved medical education, and a growing public demand for better healthcare. These factors led to the establishment of hospitals and training programs that enhanced the quality of medical practice.

How did traditional remedies influence 19th century medicine?

Traditional remedies played a significant role in shaping 19th century medicine by providing a foundation for early practices. Many physicians integrated herbal treatments and folk medicine into their practices while gradually transitioning to more scientific approaches, leading to a blend of old and new methodologies.

What was the impact of medical licensing reforms during this period?

Medical licensing reforms aimed to regulate the practice of medicine and improve standards. These reforms helped to establish credentialing systems that ensured only qualified individuals could practice, thereby enhancing public trust in healthcare professionals and services.

How did 19th century medicine contribute to modern healthcare?

19th century medicine laid the foundational principles for modern healthcare, including patient care standards and hospital organization. This era introduced evidence-based practices that continue to influence contemporary medical training and healthcare delivery systems.

What role did public health movements play in 19th century medicine?

Public health movements were crucial in addressing widespread health issues, such as epidemics and sanitation. These movements promoted awareness and led to reforms in health policies, ultimately improving community health and shaping future public health initiatives.

Conclusion

The 19th century marked a pivotal period in American medicine, characterized by the advancement of medical education and licensing, the clash between traditional remedies and scientific approaches, and the establishment of practices that resonate in modern healthcare. These developments laid a strong foundation for today’s medical landscape.

By understanding the evolution of medicine, readers can appreciate the historical context of current healthcare practices. This knowledge empowers individuals to make informed decisions regarding their health and advocate for evidence-based treatments in their communities.

Explore further by engaging with local medical history, attending lectures, or reading more about the transformative journey of medicine in America. Your active participation can foster a deeper understanding of healthcare today.