Have you ever considered how the 19th century revolutionized medicine? This period was marked by groundbreaking discoveries that transformed surgical practices and disease management, fundamentally altering the course of healthcare.

In this article, you will learn about the pivotal advancements in anesthesia, the emergence of microbiology and germ theory, and the development of vaccines that shaped modern medicine. Understanding these innovations is crucial for appreciating the medical landscape we navigate today.

We will explore the profound impact of these discoveries, detailing how they improved surgical outcomes, enhanced infection control, and led to the widespread adoption of immunization techniques.

The impact of anesthesia on surgical procedures

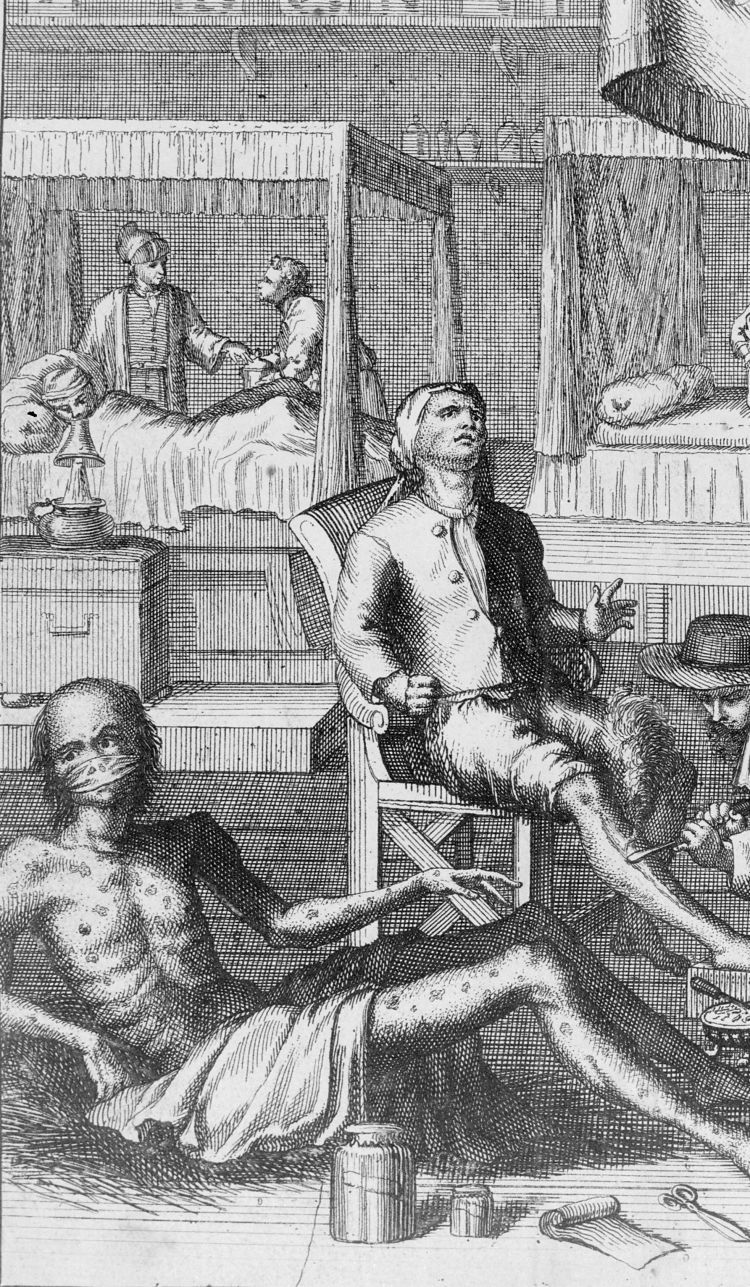

The introduction of anesthesia in the 19th century revolutionized surgical practices, dramatically altering the landscape of medicine. Prior to its use, surgical operations were often brutal and excruciatingly painful, deterring patients from seeking necessary treatments. The advent of anesthesia not only alleviated pain but also enabled surgeons to perform more complex procedures with greater precision.

One of the pioneering moments in anesthesia occurred in 1846 when William Morton, a dentist, successfully demonstrated the use of ether during a surgery at Massachusetts General Hospital. This groundbreaking event marked the beginning of modern anesthesia and set a precedent for future surgical interventions.

- Ether: First widely used anesthetic, allowing for longer surgeries.

- Chloroform: Introduced shortly after ether, it became popular for its potency.

- Nitrous oxide: Used for dental procedures, it provided a lighter sedation option.

The impact of these anesthetics was profound. Surgeons could now focus on the operation itself rather than the patient’s pain response. For instance, in 1847, James Young Simpson introduced chloroform as an anesthetic for childbirth, leading to a significant reduction in maternal suffering during labor. This application showcased how anesthesia could transform not only surgical procedures but also obstetrics.

Moreover, the use of anesthesia paved the way for more intricate surgeries. As surgeons gained confidence in their ability to perform lengthy operations without causing pain, they began to explore previously unthinkable procedures. For example, appendectomy for appendicitis became an established operation in the 1880s, performed under anesthesia, and it remains a common surgical intervention today.

The introduction of anesthesia fundamentally changed surgical procedures in the 19th century. It eliminated the fear of pain, allowing patients to undergo necessary operations and ultimately saving countless lives. The legacy of this innovation continues to shape modern medicine, demonstrating the enduring importance of advancements in anesthesia.

Advancements in microbiology and germ theory

The 19th century marked a pivotal shift in the understanding of infectious diseases, primarily due to advancements in microbiology and the formulation of germ theory. This transformation was spearheaded by several key figures whose contributions laid the groundwork for modern medicine.

One of the most significant figures was Louis Pasteur, whose experiments in the 1860s disproved the theory of spontaneous generation. He demonstrated that microorganisms were responsible for fermentation and spoilage, leading to the development of pasteurization. This process not only improved food safety but also paved the way for advances in sterilization techniques in medical settings.

- Robert Koch was another critical contributor, known for his work on the causative agents of anthrax and tuberculosis. In 1882, he identified Mycobacterium tuberculosis, establishing a bacteriological foundation for the diagnosis of infectious diseases.

- The introduction of Koch’s Postulates in 1890 provided a systematic method for linking specific pathogens to specific diseases. This was crucial in validating the germ theory.

- Joseph Lister applied Pasteur’s findings to surgery, introducing antiseptic techniques in the operating room in the 1860s. His work significantly reduced postoperative infections, thereby increasing the survival rate of surgical patients.

By the late 19th century, the acceptance of germ theory transformed medical practices. Hospitals began to implement sterilization protocols, and hand hygiene became a standard practice among healthcare professionals. For example, by 1880, the mortality rate from surgical infections had decreased from over 40% to less than 10% in some institutions due to these advancements.

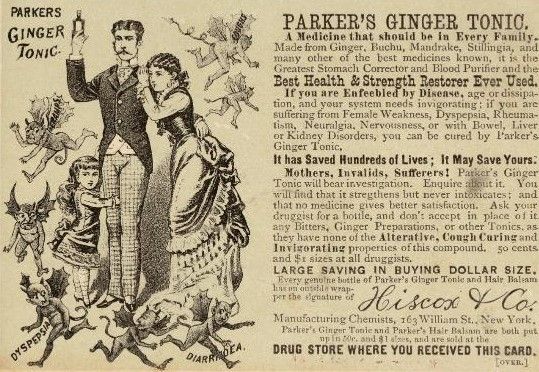

Furthermore, the establishment of microbiology as a distinct scientific discipline led to significant public health improvements. Vaccination programs expanded, building on the already widespread use of the smallpox vaccine, which had drastically reduced mortality rates. The advancements made during this era set the stage for future breakthroughs in medicine, emphasizing the importance of understanding microorganisms in disease prevention.

The development of vaccines and immunization

The 19th century witnessed significant advancements in the field of vaccines and immunization, laying the groundwork for modern preventive medicine. The journey began with Edward Jenner, who, in 1796, developed the first successful smallpox vaccine. Jenner’s innovative approach involved using material from cowpox lesions, which provided immunity against smallpox without causing the disease itself.

As the century progressed, the understanding of vaccination expanded. By the 1880s, Louis Pasteur had made groundbreaking contributions to immunology. He developed vaccines for anthrax and rabies, demonstrating that weakened forms of pathogens could stimulate protective immune responses. Pasteur’s work not only solidified the concept of vaccination but also reinforced the germ theory of disease.

- Edward Jenner: Introduced smallpox vaccine in 1796.

- Louis Pasteur: Developed vaccines for anthrax (1881) and rabies (1885).

- Emil von Behring: Created the diphtheria antitoxin in 1890, showcasing the potential of serum therapy.

These developments led to a surge in public health initiatives aimed at controlling infectious diseases. For instance, the establishment of vaccination programs became common in many countries. By the late 1800s, mass vaccination campaigns were implemented to combat outbreaks, significantly reducing mortality rates from diseases like smallpox and diphtheria.

A notable example is the compulsory smallpox vaccination program in the United Kingdom, which began in 1853. This program mandated vaccination for infants, resulting in a dramatic decrease in smallpox incidence. By the early 20th century, smallpox had become rare in several countries with sustained vaccination programs, although global eradication was not achieved until 1980.

The development of vaccines and immunization during the 19th century not only advanced medical science but also transformed public health. The principles established during this era continue to influence vaccination strategies today, underscoring the importance of preventive medicine in combating infectious diseases.

Breakthroughs in medical imaging techniques

The 19th century was a pivotal time for medical imaging, leading to groundbreaking discoveries that enhanced diagnostic capabilities. The first significant advancement was the discovery of X-rays by Wilhelm Conrad Röntgen in 1895. This discovery allowed physicians to visualize the internal structures of the human body without invasive procedures.

Röntgen’s initial experiments revealed the potential of X-rays, as he successfully captured images of bones and other dense tissues. This innovation marked the beginning of a new era in medicine, where complex fractures and foreign objects could be diagnosed with unprecedented accuracy.

- X-ray technology became widely adopted in hospitals by the early 20th century.

- It proved essential in the diagnosis of conditions such as pneumonia by allowing visualization of the lungs.

- Röntgen received the first Nobel Prize in Physics in 1901 for this transformative discovery.

Following the advent of X-rays, significant progress was made in other imaging techniques, though these belong to the 20th century. Ultrasound rests on the piezoelectric effect, discovered by Pierre and Jacques Curie in 1880; it was first used in sonar and industrial testing and only later found its way into the medical field. Obstetric ultrasound was introduced in the late 1950s and went on to become a standard tool for monitoring pregnancies, providing real-time images of the fetus.

Another groundbreaking development was the invention of the CT scan (Computed Tomography) in the 1970s, which combined X-ray technology with computer processing. This technique allowed for the creation of detailed cross-sectional images of the body, enhancing the diagnostic abilities of physicians.

In the 1980s, the MRI (Magnetic Resonance Imaging) emerged as a powerful imaging technique using magnetic fields and radio waves. Unlike X-rays and CT scans, MRI does not involve ionizing radiation, making it a safer alternative for patients. This method revolutionized the diagnosis of soft tissue injuries, brain tumors, and spinal disorders.

These breakthroughs in medical imaging have not only improved the accuracy of diagnoses but have also paved the way for advanced treatment planning and monitoring. As a result, the field of medicine has progressed significantly, enabling healthcare professionals to provide better patient care through enhanced visualization techniques.

The rise of public health and sanitation

The 19th century saw a significant transformation in public health and sanitation, driven by the growing awareness of the impact of environmental factors on health. The Industrial Revolution brought urbanization, which often resulted in overcrowded cities with inadequate sanitation facilities.

The connection between poor sanitation and disease became increasingly evident. For instance, the outbreaks of cholera in London during the 1850s highlighted the urgent need for public health reforms. The 1854 Broad Street outbreak in Soho killed over 600 people, roughly 500 of them within ten days.

- John Snow, a pioneering physician, conducted a groundbreaking study during this outbreak. His work led to the identification of contaminated water sources as a primary cause of cholera transmission.

- The establishment of the Public Health Act of 1848 in England marked a crucial step in reforming urban sanitation. This act aimed to address issues like waste disposal, clean water supply, and the establishment of local health boards.

- By the end of the century, cities like Paris and London implemented extensive sewer systems, dramatically improving public health conditions.

Another significant figure in this movement was Florence Nightingale, whose work during the Crimean War emphasized the importance of sanitary conditions in hospitals. She advocated for proper ventilation, cleanliness, and nutrition, which significantly reduced the mortality rate among soldiers.

Furthermore, the establishment of various sanitary commissions and boards in many countries contributed to the rise of public health initiatives. These organizations focused on conducting epidemiological studies and promoting hygiene education among the population. For example, in 1875, the Public Health Act consolidated earlier sanitary legislation in England, enforcing regulations to ensure cleaner living conditions.

As a result of these efforts, the late 19th century witnessed a remarkable decline in infectious diseases. The death rate from cholera, for instance, fell dramatically, from 16.6 deaths per 1,000 people in the 1850s to less than 1.0 by the 1900s in many urban areas. This decline highlighted the critical role of sanitation and public health initiatives in enhancing life expectancy and quality of life.

Innovations in surgical instruments and techniques

The 19th century marked a transformative era in surgical practices, characterized by remarkable innovations in surgical instruments and techniques. These advancements greatly improved patient outcomes and reduced surgical mortality rates.

One of the most significant developments was the introduction of anesthesia, which revolutionized surgery. Prior to its use, patients endured excruciating pain during procedures, often leading to shock or death. In 1846, the first successful public demonstration of ether anesthesia by Dr. William Morton showcased its potential, paving the way for its widespread adoption.

- Surgical Scissors and Forceps: Instruments such as hemostatic forceps were refined, allowing surgeons to control bleeding and perform more intricate procedures with precision. Metzenbaum scissors, often grouped with them, date from the early 20th century.

- Amputating Saws: The chain osteotome, an early surgical chain saw introduced around 1830, and the Gigli wire saw of the 1890s made cutting bone faster, drastically reducing the time required for procedures such as amputations.

- Needles and Sutures: The creation of curved needles and various suture materials advanced wound closure techniques, enhancing healing and reducing complications.

In addition to instrument innovations, surgical techniques also advanced significantly. The practice of antiseptic surgery emerged, championed by Joseph Lister. His promotion of antiseptic methods in the 1860s drastically reduced infection rates, further improving surgical outcomes.

For example, Lister’s use of carbolic acid as a disinfectant significantly decreased postoperative infections. By the end of the 19th century, hospitals began to adopt aseptic techniques, resulting in a marked decline in surgical mortality.

Furthermore, specialized surgical fields emerged during this period. Dental surgery, for example, built on the earlier work of Pierre Fauchard (1678–1761), often referred to as the father of modern dentistry, whose techniques and tools in oral surgery laid the foundation for later advancements and highlighted the importance of specialization in surgical practices.

Overall, the innovations in surgical instruments and techniques during the 19th century laid a critical foundation for modern surgery. The combination of improved tools, the introduction of anesthesia, and the adoption of antiseptic practices significantly enhanced surgical efficacy and patient safety.

The discovery of antibiotics and their significance

The discovery of antibiotics, which built on 19th-century germ theory but came in the 20th century, revolutionized medicine, dramatically reducing mortality rates from bacterial infections. The most notable early antibiotic was penicillin, discovered by Alexander Fleming in 1928, although its widespread civilian use began only after World War II. This breakthrough marked a turning point in the treatment of infectious diseases.

Prior to antibiotics, common bacterial infections often resulted in severe complications or death. The significant impact of antibiotics can be summarized through key milestones:

- Penicillin: Discovered in 1928, but not mass-produced until the early 1940s, it was instrumental in treating wounded soldiers during WWII.

- Tetracyclines: Introduced from the late 1940s, they expanded the range of treatable infections, including acne and respiratory diseases.

- Streptomycin: Discovered in 1943, it was the first effective treatment for tuberculosis, significantly reducing morbidity rates.

The significance of antibiotics can be understood through various factors:

- Reduction in mortality rates: Prior to antibiotics, diseases like pneumonia and syphilis had high fatality rates. For instance, pneumonia mortality dropped from 30% to less than 5% after the introduction of penicillin.

- Advancements in surgery: With the ability to prevent and treat infections, surgical procedures became safer, leading to more complex and life-saving operations.

- Public health improvements: The control of infectious diseases through antibiotics contributed to longer life expectancy and improved overall health standards.

Fleming’s discovery laid the groundwork for further antibiotic research, leading to the development of various classes of antibiotics. These medications have transformed the landscape of medicine and continue to play a crucial role in global health. As of today, antibiotics are among the most commonly prescribed medications, highlighting their lasting significance in medical practice.

The role of medical education reforms in the 19th century

Throughout the 19th century, significant reforms in medical education played a crucial role in shaping the future of healthcare. These reforms were driven by the need for improved training standards and the recognition of the importance of scientific knowledge in medical practice.

Prior to the 19th century, medical education was often informal and lacked a standardized curriculum. However, a growing emphasis on empirical research and clinical practice led to the establishment of formal medical schools. In 1800, formal medical schools were few, particularly in North America, but by 1900 their number had increased significantly.

- Johns Hopkins University founded its medical school in 1893, introducing a model of education that emphasized research and practical training.

- Harvard Medical School revamped its curriculum in the early 1870s under university president Charles Eliot, incorporating laboratory work and clinical experience into its programs.

- European institutions, such as the University of Berlin, began to prioritize the teaching of pathology and anatomy, reflecting a shift towards scientific methodologies.

One of the most notable figures in this reform movement was William Osler, who joined Johns Hopkins in 1889 as physician-in-chief of its new hospital and became professor of medicine at its medical school. Osler advocated for bedside teaching, emphasizing the importance of direct patient interaction for medical students. His methods significantly influenced future generations of physicians.

As medical education evolved, the regulation of medical qualifications became a pivotal aspect. In 1858, the Medical Act established the General Medical Council in the UK, which kept a register of qualified practitioners and oversaw the standards of the bodies that examined them. This was a crucial step towards ensuring that only qualified individuals entered the medical profession.

| Year | Institution | Key Reform |
|---|---|---|
| 1800 | Various | Limited formal medical education |
| 1858 | General Medical Council | Registration and oversight of medical qualifications |
| 1870s | Harvard Medical School | Curriculum overhaul to include clinical training |
| 1893 | Johns Hopkins University | Established a research-focused medical school |

These reforms not only elevated the standards of medical education but also contributed to the professionalization of medicine. By the end of the century, a new generation of well-trained physicians emerged, equipped with the skills and knowledge necessary to address the complex health issues of the time.

Contributions of key figures in 19th-century medicine

The 19th century was marked by the contributions of several key figures whose work fundamentally changed the landscape of medicine. These individuals not only advanced medical knowledge but also improved patient care through innovative practices.

Louis Pasteur, a French chemist and microbiologist, is renowned for his germ theory of disease. His experiments in the 1860s demonstrated that microorganisms cause fermentation and disease. This discovery led to the development of pasteurization, a method still used today to eliminate pathogens in food and beverages.

- Florence Nightingale: Pioneered modern nursing practices and emphasized sanitation in hospitals, significantly reducing mortality rates.

- Joseph Lister: Introduced antiseptic techniques in surgery, drastically lowering infection rates by advocating for cleanliness and sterilization.

- Ignaz Semmelweis: Demonstrated the importance of handwashing in preventing puerperal fever, saving countless lives in maternity wards.

Another influential figure was Charles Darwin, whose theory of evolution challenged existing medical paradigms. Though primarily known for his work in biology, Darwin’s ideas influenced medical professionals to consider the evolutionary aspects of human health and disease, shaping future research.

Moreover, William Osler, often referred to as the “father of modern medicine,” was instrumental in reforming medical education. His 1892 textbook, “The Principles and Practice of Medicine,” became a cornerstone in medical training and emphasized the importance of clinical practice alongside theoretical knowledge.

These contributions laid the foundation for contemporary medicine. The impact of these key figures can still be felt today, as their principles continue to guide medical practices and education. Their legacies are a testament to the transformative power of innovation and dedication in the field of healthcare.

Frequently Asked Questions

What were the major advancements in medical technology during the 19th century?

Significant advancements in medical technology during the 19th century included the invention of the stethoscope, improvements in surgical instruments, and the development of anesthesia. These innovations greatly enhanced the precision and comfort of medical procedures.

How did the discovery of anesthesia impact surgeries?

The discovery of anesthesia transformed surgical practices by allowing patients to undergo procedures without pain. This breakthrough led to more complex surgeries being performed safely, significantly reducing the risks associated with surgical interventions.

What role did public health reforms play in the 19th century?

Public health reforms in the 19th century focused on improving sanitation, reducing disease transmission, and promoting hygiene. These initiatives included the establishment of health boards and the implementation of vaccination programs, which collectively enhanced community health outcomes.

Who were some influential women in 19th-century medicine?

Influential women in 19th-century medicine included Florence Nightingale, who pioneered nursing practices, and Elizabeth Blackwell, the first woman to receive a medical degree in the United States. Their contributions helped shape the role of women in healthcare.

What were the challenges faced by medical professionals in the 19th century?

Medical professionals in the 19th century faced challenges such as limited access to education, resistance to new medical practices, and a lack of standardized training. These hurdles often hindered the advancement of medical knowledge and patient care.

Conclusion

The 19th century was pivotal in medicine, marked by the introduction of anesthesia, the rise of germ theory and antiseptic surgery, the spread of vaccination and sanitation, significant reforms in medical education that improved healthcare quality, and key contributions from influential figures who reshaped medical practices. Together, these advancements laid the foundation for modern medicine, including the antibiotics that followed in the 20th century. By understanding these historical developments, readers can appreciate the evolution of healthcare and recognize the importance of ongoing medical education and innovation in improving patient outcomes. This knowledge empowers individuals to advocate for better healthcare practices today. To further explore the impact of these medical discoveries, consider engaging in community health initiatives or participating in educational programs that promote awareness of historical and modern healthcare advancements.